Alexa records US couple’s private conversation then sends it to random contact

Two weeks ago, Amazon’s Alexa recorded a US couple’s private conversation and sent it to an acquaintance without their knowledge. Amazon has explained why the voice-controlled Echo speaker picked up the conversation and sent it to the husband’s employee 176 miles away.

As Portland resident Danielle (last name withheld) told Kiro7.com, the family had Amazon Echo devices in every room until a couple of weeks ago, when a phone call changed her mind about Alexa. She had been talking to her husband when one of his employees called to warn them about a possible hack.

“The person on the other line said, ‘unplug your Alexa devices now,’” she said. “‘You’re being hacked.’” That person was calling from Seattle.

“We unplugged all of them and he proceeded to tell us that he had received audio files of recordings from inside our house. At first, my husband was like, ‘No you didn’t!’ And the (recipient of the message) said, ‘You sat there talking about hardwood floors.’ And we said, ‘Oh gosh, you really did hear us.’”

Danielle said she listened to the conversation when it was sent back to her. She felt “invaded,” describing the Alexa blunder as “a total privacy invasion.” She said she would never plug the devices in again.

She called Amazon repeatedly. An Alexa engineer then investigated the claim.

“They said, ‘Our engineers went through your logs, and they saw exactly what you told us, they saw exactly what you said happened, and we’re sorry,’” Danielle recounted. “He apologised like 15 times in a matter of 30 minutes and he said we really appreciate you bringing this to our attention, this is something we need to fix!”

The engineer did not explain why it happened, though. He just said that the device guessed what they were saying. She said the device did not advise her that it was preparing to send the conversation to someone in her husband’s contact list.

Amazon offered to “de-provision” the Alexa communications for Danielle so her family could keep using its smart home features. She instead wanted to return the products for a refund, which Amazon was unwilling to give.

Amazon, in a statement provided to the media, described the event as a rare occurrence. “Echo woke up due to a word in background conversation sounding like ‘Alexa.’ Then, the subsequent conversation was heard as a ‘send message’ request. At which point, Alexa said out loud, ‘To whom?’ At which point, the background conversation was interpreted as a name in the customer’s contact list.

“Alexa then asked out loud, ‘[Contact name], right?’ Alexa then interpreted background conversation as ‘right.’ As unlikely as this string of events is, we are evaluating options to make this case even less likely.”
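The chain of events in Amazon’s statement can be pictured as a four-step dialog in which every step has to be misheard in just the right way. The sketch below is purely illustrative: Amazon has not published how Alexa implements this flow, and the `State` names, the `run_dialog` function and the exact-match phrase checks are all hypothetical stand-ins for real speech recognition.

```python
from enum import Enum, auto
from typing import Iterable, Optional, Set


class State(Enum):
    IDLE = auto()             # waiting for the wake word
    AWAIT_COMMAND = auto()    # woke up, waiting for a command
    AWAIT_RECIPIENT = auto()  # asked "To whom?"
    AWAIT_CONFIRM = auto()    # asked "[Contact name], right?"


def run_dialog(heard: Iterable[str], contacts: Set[str]) -> Optional[str]:
    """Return the contact a message would go to, or None if the flow breaks.

    `heard` is what the recognizer believes it heard, phrase by phrase.
    `contacts` holds lowercase contact names. Both are assumptions made
    for illustration only.
    """
    state = State.IDLE
    recipient = None
    for phrase in heard:
        phrase = phrase.strip().lower()
        if state is State.IDLE:
            if phrase == "alexa":
                state = State.AWAIT_COMMAND
        elif state is State.AWAIT_COMMAND:
            # Anything other than the command drops back to idle.
            state = State.AWAIT_RECIPIENT if phrase == "send message" else State.IDLE
        elif state is State.AWAIT_RECIPIENT:
            if phrase in contacts:
                recipient = phrase
                state = State.AWAIT_CONFIRM
            else:
                state = State.IDLE
        elif state is State.AWAIT_CONFIRM:
            if phrase == "right":
                return recipient  # all four steps matched: message is sent
            state, recipient = State.IDLE, None
    return None


# Four consecutive "matches" are needed before anything is sent.
sent_to = run_dialog(["alexa", "send message", "sam", "right"], {"sam"})
# A single step that does not match aborts the flow.
aborted = run_dialog(["alexa", "send message", "sam", "hardwood floors"], {"sam"})
```

In this toy model a message goes out only when the wake word, the command, the contact name and the confirmation are all heard in order, which mirrors why Amazon called the sequence unlikely.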

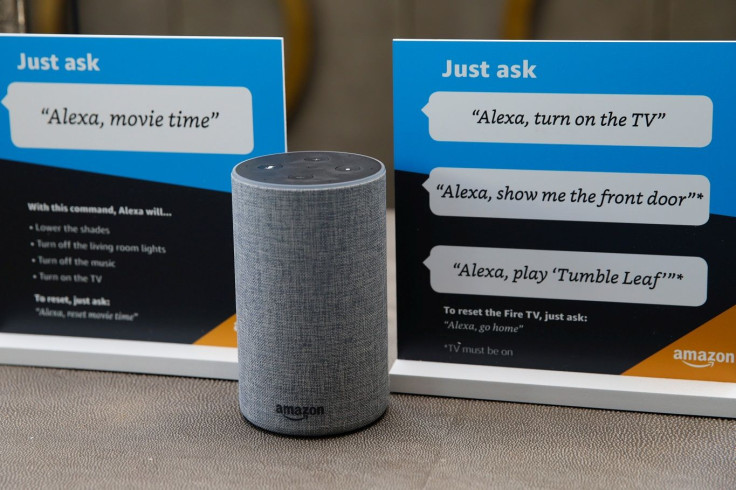

Alexa is a virtual assistant built into Amazon Echo smart speakers. Users activate it by saying the word “Alexa,” followed by a command.

This isn’t the first time Alexa has been caught in a shady practice. In March, the virtual assistant creeped out several households when it suddenly laughed at random times, with users reporting that it did so for no apparent reason.

Amazon explained the incidents much as it explained the conversation-sending incident above. It told the media that Alexa mistakenly heard the phrase “Alexa, laugh,” and therefore laughed.

“We are changing that phrase to be ‘Alexa, can you laugh?’ which is less likely to have false positives, and we are disabling the short utterance ‘Alexa, laugh.’”

Amazon also said it was changing the virtual assistant’s response from laughter alone to “Sure, I can laugh,” followed by laughter.